Reference Video Skill Overview and Requirements (VSK Echo Show)

Alexa Video Multimodal Reference Software is a modular project for developing Alexa video skills on Echo Show devices. Video skills are Alexa skills that can play movies, television, or episodic content. Multimodal describes Echo Show devices, which offer both voice and screen-based experiences. Setting up the reference project is the fastest way to get started with developing Alexa video skills on Echo Show devices. Follow the guides in this section to install, build, and deploy the reference video skill, and to learn how it works. You can then use the reference software as a basis for your own projects.

- Alexa Video Multimodal Reference Software

- Command-line Interface (CLI) Tool

- Requirements

- CloudFormation Stack Structure

- Quickstart Implementation Steps

- Next Steps

Alexa Video Multimodal Reference Software

The main components of a video skill are a Lambda function, a web player, and a skill manifest. As with other Alexa skills, the Lambda function serves as the skill's backend. The web player acts as the skill's front end and runs on an Echo Show device. It renders video and shows UI controls such as play and pause buttons. As with other Alexa skills, the video skill manifest is a JSON file that describes your skill.

This project contains a web player, a Lambda, and the Alexa Video Infrastructure CLI Tool.

The CLI tool automates the creation and updating of video skills using the reference web player and the Lambda. Although you can build and manage your own web player and Lambda without the CLI tool, using the CLI tool is highly recommended if you want a fully functional video skill quickly.

Command-line Interface (CLI) Tool

The reference video skill is an open source project with various components. Most importantly, it includes the command-line interface (CLI) tool, which runs in your macOS terminal or Windows PowerShell. The CLI tool uses AWS CloudFormation to provision a collection of AWS resources, so that you spend less time managing these resources and more time developing your video skill. To get started, you manually prepare your Amazon developer accounts (AWS, Alexa, and Login with Amazon services) for a new video skill, and then enter the data related to those settings into the CLI tool.

With the CLI tool, most of the installation, build, and deployment process for the reference video skill is automated, so you don't need to set up every resource and service by hand. At this point, you might want to revisit the glossary to follow along.

Requirements

Before you proceed, your computer environment must have the following:

- Node.js 10.x. Note: Currently, this is the only supported version. Test your installed version with this command:

node --version

- npm (prebuilt in Node.js). Note: Currently, Node.js supports every npm version between 5.6.0 and 6.11.3. Test your npm version with:

npm --version

Use npm install -g npm@version to downgrade your npm, if needed.

- Python 2.7 or later (required by some installation packages)

- Firefox. Note: Firefox is required during deployment of the video skill. You don't need to open it, but it must be installed so that the automatically generated unit tests can run against the web player of your video skill.

- PowerShell (Windows) or terminal (macOS)
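The version requirements above can be checked programmatically. The following is a minimal sketch in Node.js, assuming the ranges stated in this section (Node.js 10.x, npm between 5.6.0 and 6.11.3). The helper names parseVersion, compareVersions, and checkEnvironment are hypothetical and are not part of the reference software or its CLI tool. The npm version is passed in as a string, as you would copy it from the output of npm --version.

```javascript
// Sketch only: these helpers are hypothetical, not part of the CLI tool.

// Turn "v10.24.1" or "6.4.1" into [major, minor, patch].
function parseVersion(text) {
  const match = /^v?(\d+)\.(\d+)\.(\d+)/.exec(text.trim());
  if (!match) {
    throw new Error(`Unrecognized version string: ${text}`);
  }
  return match.slice(1).map(Number);
}

// Negative if a < b, zero if equal, positive if a > b.
function compareVersions(a, b) {
  for (let i = 0; i < 3; i += 1) {
    if (a[i] !== b[i]) {
      return a[i] - b[i];
    }
  }
  return 0;
}

// Return a list of human-readable problems; an empty list means both
// versions fall inside the ranges stated in the Requirements section.
function checkEnvironment(nodeVersion, npmVersion) {
  const node = parseVersion(nodeVersion);
  const npm = parseVersion(npmVersion);
  const problems = [];
  if (node[0] !== 10) {
    problems.push(`Node.js 10.x is required, found ${nodeVersion}`);
  }
  const tooOld = compareVersions(npm, [5, 6, 0]) < 0;
  const tooNew = compareVersions(npm, [6, 11, 3]) > 0;
  if (tooOld || tooNew) {
    problems.push(`npm 5.6.0 to 6.11.3 is required, found ${npmVersion}`);
  }
  return problems;
}

// process.version is the running Node.js version; "6.4.1" is a sample
// value standing in for the output of npm --version.
console.log(checkEnvironment(process.version, "6.4.1"));
```

An empty array means the environment matches. Each entry otherwise names one requirement that is not met.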

You also need access to the following:

- Mobile phone or a tablet with Alexa App installed

- An Echo Show device

- AWS Developer Account

- Alexa Developer Account

Other Dependencies

Check your computer environment against the configurations listed here.

Windows users: In an elevated PowerShell or CMD.exe (run as Administrator), install all the required tools and configurations using Microsoft windows-build-tools with this command:

npm install --global --production windows-build-tools

macOS users: You might need to install Xcode and the Xcode Command Line Tools by running xcode-select --install in a terminal.
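As a small illustration of the platform-specific guidance above, the sketch below maps the value of Node.js's process.platform to the command mentioned for that operating system. The function name buildToolsHint is hypothetical. The sketch only prints the command and does not run it.

```javascript
// Sketch only: buildToolsHint is a hypothetical helper.
// It returns the command this section suggests for the given platform,
// or null when the section lists no extra dependency for it.
function buildToolsHint(platform) {
  switch (platform) {
    case "win32": // Windows, as reported by process.platform
      return "npm install --global --production windows-build-tools";
    case "darwin": // macOS, as reported by process.platform
      return "xcode-select --install";
    default:
      return null;
  }
}

const hint = buildToolsHint(process.platform);
console.log(hint === null ? "No extra build tools listed for this platform." : hint);
```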

CloudFormation Stack Structure

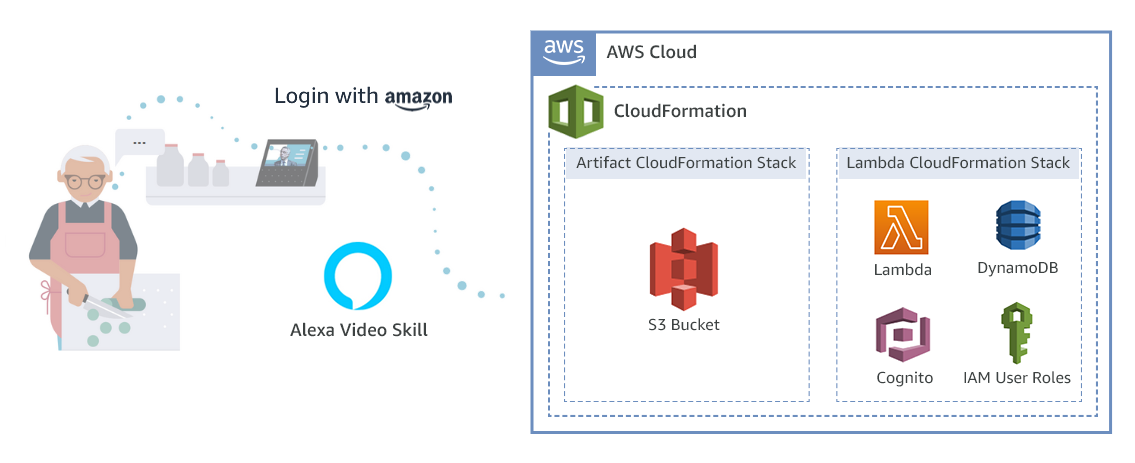

The CLI tool builds two stacks: the Artifact CloudFormation Stack and the Lambda CloudFormation Stack.

The Artifact CloudFormation Stack contains an S3 bucket that hosts the following:

- Built Lambda (as a zip file)

- Sample video content

- Built web player (as a website)

When the AWS Lambda is provisioned as part of the Lambda CloudFormation stack, its source code originates from the Lambda zip file located in the S3 bucket.

The Lambda CloudFormation Stack creates the AWS Lambda, the AWS Cognito resources used for account linking, and the DynamoDB tables used to store nextTokens for pagination and to track video progress in support of "continue watching" functionality. It also provisions various IAM roles to support the skill.
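To illustrate why a table of nextTokens is useful for pagination, here is a minimal in-memory sketch. It is not the reference Lambda's implementation: the Map stands in for a DynamoDB table, and the names tokenTable, getPage, and the token format are assumptions made for this example. Each token records where the next page starts, so a later request that carries the token can resume from that position.

```javascript
// Sketch only: an in-memory Map stands in for the DynamoDB table.
// tokenTable, getPage, and the "token-N" format are hypothetical.
const tokenTable = new Map(); // nextToken -> offset of the next page
let tokenCounter = 0;

// Return one page of items plus a nextToken when more items remain.
function getPage(catalog, pageSize, nextToken) {
  let offset = 0;
  if (nextToken !== undefined) {
    if (!tokenTable.has(nextToken)) {
      throw new Error("Unknown or already used nextToken");
    }
    offset = tokenTable.get(nextToken);
    tokenTable.delete(nextToken); // tokens are single-use in this sketch
  }

  const items = catalog.slice(offset, offset + pageSize);
  const end = offset + items.length;

  let newToken;
  if (end < catalog.length) {
    tokenCounter += 1;
    newToken = `token-${tokenCounter}`;
    tokenTable.set(newToken, end);
  }
  return { items, nextToken: newToken };
}

// Walk through a small sample catalog two items at a time.
const sampleCatalog = ["video-a", "video-b", "video-c", "video-d", "video-e"];
let page = getPage(sampleCatalog, 2);
console.log(page.items);
while (page.nextToken !== undefined) {
  page = getPage(sampleCatalog, 2, page.nextToken);
  console.log(page.items);
}
```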

The video skill itself is created using public REST APIs outside of the CloudFormation stacks.

How It Works

AWS Lambda source code originates from the Lambda zip file in the S3 bucket. The skill is created using publicly available REST APIs.
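The relationship between the two stacks can be sketched as a small dependency graph. The example below assumes that, because the Lambda's source code comes from the zip file in the S3 bucket, the Artifact stack has to exist before the Lambda stack. The object layout, field names, and the deploymentOrder function are illustrative only and do not mirror the actual CloudFormation templates or the CLI tool's code.

```javascript
// Sketch only: a hypothetical description of the two stacks.
const stacks = {
  artifact: {
    provides: ["S3 bucket (Lambda zip, sample video content, web player site)"],
    dependsOn: [],
  },
  lambda: {
    provides: ["AWS Lambda", "Cognito resources", "DynamoDB tables", "IAM roles"],
    // Assumption: the Lambda code is read from the bucket in the artifact stack.
    dependsOn: ["artifact"],
  },
};

// Depth-first topological sort: each stack appears after its dependencies.
function deploymentOrder(graph) {
  const order = [];
  const state = new Map(); // stack name -> "visiting" | "done"

  function visit(name) {
    if (state.get(name) === "done") return;
    if (state.get(name) === "visiting") {
      throw new Error(`Dependency cycle at ${name}`);
    }
    if (!Object.prototype.hasOwnProperty.call(graph, name)) {
      throw new Error(`Unknown stack: ${name}`);
    }
    state.set(name, "visiting");
    for (const dependency of graph[name].dependsOn) {
      visit(dependency);
    }
    state.set(name, "done");
    order.push(name);
  }

  for (const name of Object.keys(graph)) {
    visit(name);
  }
  return order;
}

console.log(deploymentOrder(stacks)); // [ 'artifact', 'lambda' ]
```

The video skill itself does not appear in the graph, since it is created through REST APIs outside of the CloudFormation stacks.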

Quickstart Implementation Steps

The process for implementing the reference video skills is broken out into the following steps:

Next Steps

Before jumping into the implementation, see Terminology and Components to get oriented with potentially new terminology.

Then proceed to Step 1: Configure Developer Account Settings.

Last updated: Oct 29, 2020