Accessibility Guide for Web Apps

For more extensive accessibility guidelines for Vega Web Apps, see WCAG (Level AA at minimum) and WAI-ARIA. WCAG covers a broad range of accessibility requirements.

The goal of accessibility is to deliver a TV app that supports users with the following types of disabilities:

- Visual impairments: from color blindness to complete blindness.

- Mobility limitations: from reduced dexterity to gesture difficulties.

- Speech variations: from accented to nonverbal communication.

- Cognitive challenges: from learning disabilities to having difficulty with complex instructions.

- Hearing loss: from partial to complete deafness.

By addressing these areas through inclusive design, such as screen reader support, simplified controls, alternative input methods, clear interfaces, and captions, developers create TV experiences that enable all users to navigate and enjoy content independently.

Vega WebView integrates with Vega OS APIs to provide HTML5 standard accessibility features, so TV apps support users with diverse disabilities. While Vega OS provides the foundational accessibility infrastructure, app developers must implement proper focus management within their web apps, so each interactive element can be programmatically accessed and navigated using remote controls or assistive technologies.

Developers must add appropriate descriptions, labels, and action attributes to web UI components so screen readers can announce content meaningfully, and users can understand the purpose of each element. This includes setting ARIA attributes, maintaining logical tab order, implementing focus trapping in modals, restoring focus after interactions, and providing visible focus indicators that clearly show the current navigation position. In frameworks like VueJS, ReactJS, React Native, React Native for Vega, and AngularJS, write these attributes in your JSX or HTML-like templates.

For more information, see the ReactJS Accessibility Guide or the Angular Accessibility Guide.

Accessibility guidelines

Use the following guidelines to implement accessibility.

Semantic HTML structure

- Use meaningful HTML tags. For example, use semantic HTML tags like <header>, <footer>, and <nav>.

- For buttons, use the <button> element instead of <div> or <span>.

- For links, use <a> instead of non-semantic elements.

- Use a hierarchical heading structure: Maintain ordered headings in your app, from <h1> to <h6>. Hierarchical headings help screen readers and other assistive technologies understand the content hierarchy, which improves overall navigation.

The system and its assistive technologies, such as VoiceView, Screen Magnifier, and TextBanner, recognize these semantic tags and handle most accessibility behavior automatically when the tags are used correctly.
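The heading guideline above can be checked with a small script. The following is a minimal sketch, not part of any Vega tooling: the function names `findSkippedHeadingLevels` and `collectHeadingLevels` are hypothetical, and the check only flags headings that jump more than one level deeper than the heading before them.

```javascript
// Returns the positions where a heading skips one or more levels,
// for example an <h4> that directly follows an <h2>.
function findSkippedHeadingLevels(levels) {
  const problems = [];
  for (let i = 1; i < levels.length; i++) {
    const previous = levels[i - 1];
    const current = levels[i];
    // Going deeper by more than one level skips part of the hierarchy.
    if (current - previous > 1) {
      problems.push({ index: i, from: previous, to: current });
    }
  }
  return problems;
}

// In a browser, collects the heading levels from the rendered page.
// `root` is expected to be the document or any element inside it.
function collectHeadingLevels(root) {
  return Array.from(root.querySelectorAll("h1, h2, h3, h4, h5, h6"))
    .map((heading) => Number(heading.tagName.charAt(1)));
}
```

In a page, you could run `findSkippedHeadingLevels(collectHeadingLevels(document))` from the developer console and review any entries it returns.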

Accessible Rich Internet Applications (ARIA) attributes

Developers may need non-semantic elements, such as custom components. Custom components might include tiles, cards, or carousels. In such cases, use ARIA attributes to communicate the purpose and behavior to assistive technologies.

ARIA attributes help provide three key types of information:

- What the element is. Example: role="button".

- What it does. Example: aria-pressed, or aria-expanded.

- Additional context or labels. Example: aria-label, or aria-describedby.

For a list of ARIA roles, states, and properties you can use, see the ARIA Authoring Practices Guide.

Here is an example of ARIA usage.

```html
<section aria-labelledby="features-title">
  <h2 id="features-title">Accessibility Features</h2>
  <p>This section describes how to make your content accessible.</p>
</section>
```
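For a custom component, the three kinds of information listed earlier (what the element is, what it does, and its label) can be set together. The following is a minimal sketch for a toggle-style tile built from a non-semantic element. The helper names `toggleTileAttributes` and `applyAttributes` are hypothetical and exist only for this illustration.

```javascript
// Builds the attribute set for a custom, non-semantic toggle control.
function toggleTileAttributes(label, pressed) {
  return {
    role: "button",                  // what the element is
    "aria-pressed": String(pressed), // what state it is in
    "aria-label": label,             // how it should be announced
    tabindex: "0",                   // makes the element focusable
  };
}

// Copies the attributes onto an element. `element` is any object with a
// setAttribute method, such as a <div> in the page.
function applyAttributes(element, attributes) {
  for (const [name, value] of Object.entries(attributes)) {
    element.setAttribute(name, value);
  }
}
```

In a page, you might call `applyAttributes(tileDiv, toggleTileAttributes("Add to watchlist", false))` and call it again with `true` when the user activates the tile, so the announced state stays in sync with the visual state.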

Alt text for image and icon descriptions

VoiceView can't interpret images or graphics on its own. You must provide an alt attribute or another meaningful description. For example, for a settings icon, use a description like "settings" rather than "an image of a settings icon" or "an icon." VoiceView already adds "an image…" or "link image…" to the announcement.

Here's an example of alt text for a settings icon.

<img src="" alt="Settings">

- Decorative images: For purely decorative images that add no user value, leave the description empty (alt="") so screen readers can skip them.

- Repeating text: Don't repeat visible text in the alt attribute or aria-label, because the same text would be announced twice.
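The alt text guidance above can be turned into a simple review aid. The following is a minimal sketch: the function name `checkAltText` and the prefix list are assumptions made for illustration, and the list is not an official set of phrases that VoiceView adds.

```javascript
// Phrases that only restate "this is an image". The list is illustrative.
const REDUNDANT_PREFIXES = ["image of", "an image of", "picture of", "icon of", "an icon"];

// Classifies an alt text string as "empty", "redundant", or "ok".
function checkAltText(alt) {
  const text = alt.trim().toLowerCase();
  if (text === "") {
    return "empty"; // appropriate only for purely decorative images
  }
  if (REDUNDANT_PREFIXES.some((prefix) => text.startsWith(prefix))) {
    return "redundant"; // the screen reader already says it is an image
  }
  return "ok";
}
```

A check like this only catches obvious wording problems. Whether a description is meaningful still needs a manual review with VoiceView enabled.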

Focus management

The focus on an element should be visible and clear. Follow the WCAG guidelines. If you use semantic HTML tags (such as <button> and <a>), the focus ring appears by default, and you can customize it to match your app's design. VoiceView identifies the focused element and reads it aloud. The following are some focus management guidelines.

- Initial focus: Use the React/Angular autoFocus prop.

- Non-semantic tags: If you use these tags, write custom JavaScript code to apply focus, and apply a custom style onFocus.

- tabindex: Only use for the following use cases.

  - You're making a non-focusable element focusable.

  - You're customizing the focus order, which affects modals and custom components.

  Here's an example:

  ```html
  <!-- tabindex makes an element focusable -->
  <div tabindex="0">Focusable div using tabindex</div>
  ```

- Letter spacing: Keep it consistent and avoid overly tight or loose spacing.

- All-caps: Avoid all-caps, especially for long sentences because it's harder to read.

- Line height: Use at least 1.5 (or 150%) to improve legibility as per WCAG.

- Typography: Maintain consistent typography.
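Focus trapping in a modal and restoring focus when it closes can be sketched as follows. This is an illustration under stated assumptions, not a Vega API: `nextFocusIndex` and `openModal` are hypothetical helpers, `focusableItems` is assumed to be an array of the modal's focusable elements in visual order, and the mapping from remote key events to forward or backward movement is left to the app.

```javascript
// Returns the index that should receive focus next inside a modal.
// Moving past either end wraps around, so focus never leaves the dialog.
function nextFocusIndex(currentIndex, itemCount, backwards) {
  if (itemCount === 0) return -1;
  const step = backwards ? -1 : 1;
  return (currentIndex + step + itemCount) % itemCount;
}

// Opens a modal: remembers the element that had focus, focuses the first
// control, and returns a function that restores focus when the modal closes.
function openModal(doc, focusableItems) {
  const previouslyFocused = doc.activeElement;
  if (focusableItems.length > 0) {
    focusableItems[0].focus();
  }
  return function closeModal() {
    if (previouslyFocused && typeof previouslyFocused.focus === "function") {
      previouslyFocused.focus();
    }
  };
}
```

In a page, you might call `const close = openModal(document, items)` when the dialog appears, move focus with `items[nextFocusIndex(i, items.length, false)].focus()` on each navigation key, and call `close()` when the dialog is dismissed.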

Programmatic announcements

Developers who build UI without semantic tags, or who need to announce information programmatically, have a couple of options. The following options are useful for web apps that use canvas to draw charts, diagrams, interactive navigation, or 3D world elements.

Option 1: Use a <div> live region so you can update the announcement text whenever you need to

Here's an example:

```html
<!-- index.html -->
<div id="announcer" aria-live="assertive" aria-atomic="true"></div>
<script>
  const announcerDiv = document.getElementById("announcer");
  // Simulate an update
  setTimeout(() => {
    announcerDiv.textContent = "New Announcement!";
  }, 5000);
</script>
```
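If the same message needs to be announced more than once, writing identical text into the live region may not register as a change. A common workaround is to clear the region first and then write the message. The following is a minimal sketch of that pattern. The helper name `announce`, the `schedule` parameter, and the 100 ms delay are assumptions for illustration, and the behavior should be verified with VoiceView on a device.

```javascript
// Writes a message into a live region. The region is cleared first so that
// repeating the same message still counts as a content change.
// `region` is the live-region element; `schedule` defaults to setTimeout and
// can be replaced in tests.
function announce(region, message, schedule = setTimeout) {
  region.textContent = "";
  schedule(() => {
    region.textContent = message;
  }, 100); // short delay; the value is an assumption, tune it on device
}
```

In a page, you might call `announce(document.getElementById("announcer"), "Download complete")` each time the status changes.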

Option 2: Use postMessage to send a message to the Vega WebView container and handle the message using onMessage

In onMessage, invoke AccessibilityInfo.announceForAccessibility(). This option takes a little more setup but operates similarly to how SpeechSynthesis works.

SpeechSynthesis is not currently supported by the WebView component. It's possible to create an implementation using postMessage and onMessage to an extent. There are some behaviors that cannot currently be duplicated with AccessibilityInfo.

In this example, a type-value message pattern is used, much like the patterns commonly used with JSON APIs or socket data. Tagging each message with a type makes it easier to serialize and deserialize different data types when messages are sent or received. In the following code, the first script is the web app, and the second is the Vega Web App code.

Web app example:

```html
<!-- index.html -->
<script>
  const message = JSON.stringify({
    type: "announcement",
    value: "New Announcement!"
  });
  // use postMessage to send data to Vega Web App.
  window.ReactNativeWebView.postMessage(message);
</script>
```

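Vega Web App example:

```tsx
// App.tsx
import { AccessibilityInfo } from "@amazon-devices/react-native-kepler";
// Assumption: WebView and WebViewMessageEvent come from the Vega WebView
// package; adjust the import path to match your project.
import { WebView, WebViewMessageEvent } from "@amazon-devices/webview";

export const App = () => {
  // Returns the value if the message type is "announcement", otherwise null.
  const getAnnouncement = (message: string): string | null => {
    try {
      const parsed = JSON.parse(message);
      return parsed.type === "announcement" && typeof parsed.value === "string"
        ? parsed.value
        : null;
    } catch {
      return null; // ignore messages that aren't valid JSON
    }
  };

  return (
    <WebView
      source={{ uri: "https://amazon.com" }}
      // use onMessage to receive data from postMessage
      onMessage={(event: WebViewMessageEvent) => {
        const announcement = getAnnouncement(event.nativeEvent.data);
        if (announcement) {
          // announce
          AccessibilityInfo.announceForAccessibility(announcement);
        }
      }}
    />
  );
};
```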
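On the web side, the page may also be opened outside the WebView, for example in a desktop browser during development, where window.ReactNativeWebView is undefined. The following is a minimal sketch of a guarded sender that uses the same type-value pattern. The helper names `encodeMessage` and `sendAnnouncement` are hypothetical.

```javascript
// Serializes a type-value message.
function encodeMessage(type, value) {
  return JSON.stringify({ type, value });
}

// Sends an announcement through the bridge if it exists. Returns false when
// the bridge is missing, so the caller can fall back to another approach
// such as an aria-live region.
function sendAnnouncement(bridge, text) {
  if (!bridge || typeof bridge.postMessage !== "function") {
    return false;
  }
  bridge.postMessage(encodeMessage("announcement", text));
  return true;
}
```

In a page, you might call `sendAnnouncement(window.ReactNativeWebView, "New Announcement!")` and update a live region when it returns false.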

Typography and color

Here are some guidelines for typography and color.

- Font sizes must meet readability criteria.

- As required by WCAG, the color contrast ratio must be ≥ 4.5:1 for normal text and ≥ 3:1 for large text. See the Contrast Checker at https://webaim.org/resources/contrastchecker/.

- Visual focus should use a different color than the background.

- The font family should be easy to read. Avoid fonts that are overly cursive or too light.

- Line height should make the content easy to read.

This is only a partial list. There are many more accessibility guidelines for typography and color.
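The contrast thresholds above can also be checked in code. The following is a minimal sketch of the WCAG contrast ratio calculation for 8-bit sRGB colors. The function names are hypothetical, colors are assumed to be `[red, green, blue]` arrays with values from 0 to 255, and transparency is not handled.

```javascript
// Converts one 8-bit sRGB channel to its linear value.
function linearize(channel) {
  const c = channel / 255;
  return c <= 0.04045 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
}

// Relative luminance of an [r, g, b] color, from 0 (black) to 1 (white).
function relativeLuminance([r, g, b]) {
  return 0.2126 * linearize(r) + 0.7152 * linearize(g) + 0.0722 * linearize(b);
}

// Contrast ratio between two colors, from 1 (identical) to 21 (black on white).
function contrastRatio(foreground, background) {
  const a = relativeLuminance(foreground);
  const b = relativeLuminance(background);
  const lighter = Math.max(a, b);
  const darker = Math.min(a, b);
  return (lighter + 0.05) / (darker + 0.05);
}

// Applies the AA thresholds: 4.5:1 for normal text, 3:1 for large text.
function meetsAA(ratio, isLargeText) {
  return ratio >= (isLargeText ? 3 : 4.5);
}
```

For example, `meetsAA(contrastRatio([118, 118, 118], [255, 255, 255]), false)` evaluates gray text (#767676) on a white background against the normal-text threshold.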

VoiceView

Test with VoiceView to avoid redundant announcements, maintain a correct reading order, and enable live updates (aria-live).

Test playback, Alexa, and other audio behavior.

To hide non-interactive content from the accessibility API, use aria-hidden="true". Check the MDN docs for more information. Here are some examples of non-interactive content.

- Purely decorative content, such as icons or images

- Duplicated content, such as repeated text

- Offscreen or collapsed content, such as menus

Accessibility features in Vega WebView

- TV Remote (DPAD, Back, Select)

- VoiceView (screen reader)

- Magnifier (screen zoom)

- Captions/Subtitles

Enabling accessibility elements in WebView

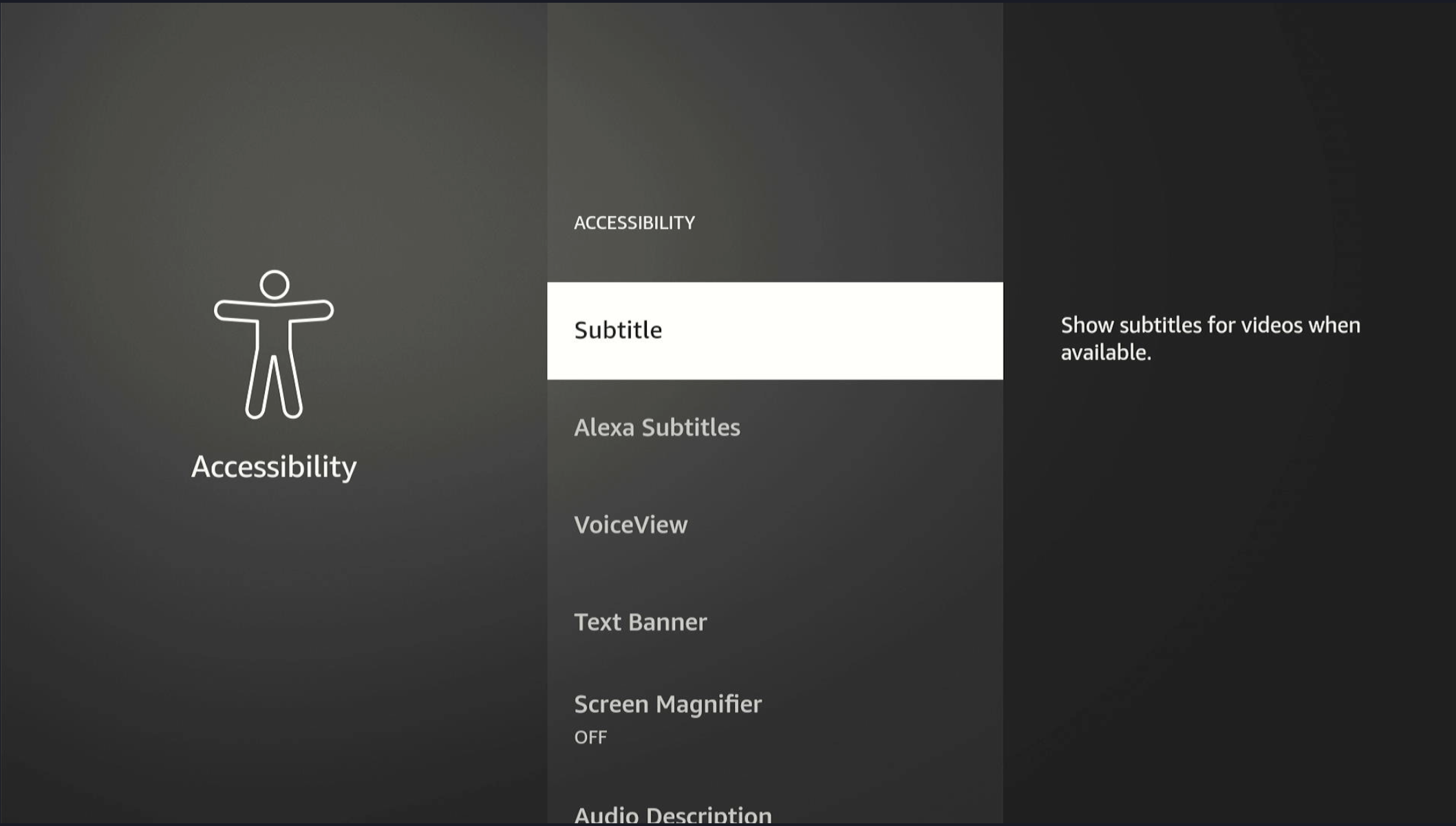

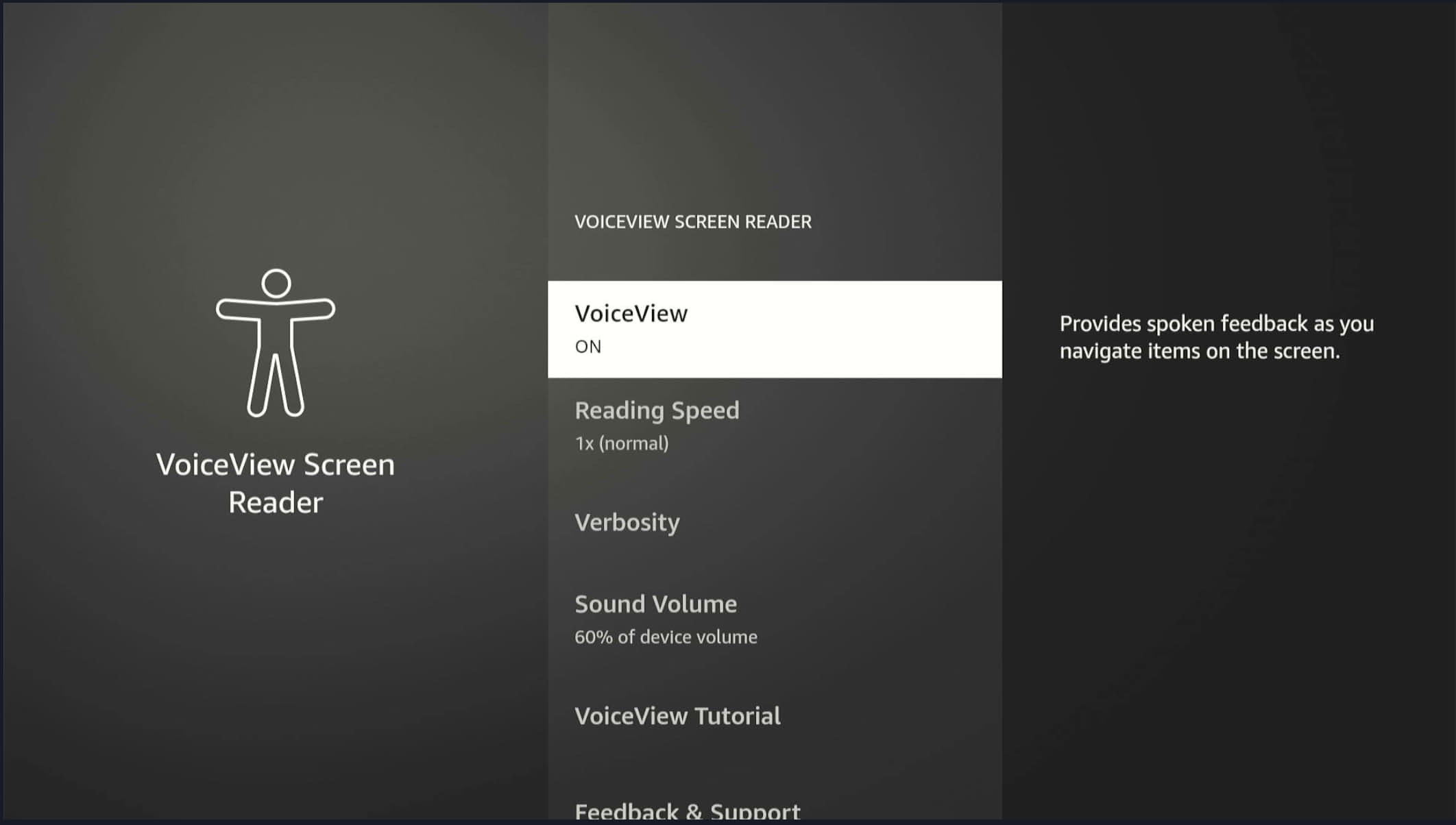

Enable VoiceView

Navigating on the screen

- Navigate to Accessibility Settings.

- Toggle VoiceView ON.

Using the Fire TV remote

- Hold down the Back and Menu keys for 2 seconds.

Using the command line

vega device shell

vdcm set "com.amazon.devconf/system/accessibility/VoiceViewEnabled" "ENABLED"

Disable VoiceView

- Navigate to Accessibility Settings.

- Toggle VoiceView OFF.

Hide elements for VoiceView

To hide elements from VoiceView, use the following attribute.

aria-hidden="true"

See ARIA: aria-hidden attribute for more information.

Notify VoiceView of an element when the default accessible name is missing

Add an aria-label so that VoiceView can announce the element.

<button aria-label="Close dialog">X</button>

Announcing dynamic values

HTML, ReactJS, and Angular use aria-live. Use the following to announce dynamic values.

<div aria-live="polite" id="status"><!-- Text inserted here will be announced --></div>

See ARIA: aria-live attribute for more information.

Test cases

Here are some minimum test cases to verify your app's accessibility.

VoiceView

VoiceView can be enabled and disabled

- Navigate to Accessibility Settings.

- Toggle VoiceView ON/OFF.

The app works with VoiceView enabled

- Enable VoiceView.

- Navigate through the app using your remote.

- Verify voice prompts for each UI element.

Text is spoken on a new screen

On a new screen, the header, description, usage hints, orientation text, and currently focused item are spoken.

- Navigate to different app sections (for example, Home, Settings, Content).

- Confirm VoiceView output.

Navigation and spoken elements work

Make sure a user can navigate (using directional pad/select/quick select actions on buttons) to all actionable elements. The focused element must be spoken in full, and static text for that element must be spoken in Normal Mode.

Focused element is spoken after a pause

You should hear spoken Usage Hints, Orientation Text, Described By, and Static Text after a short pause (~0.5 seconds).

Images and icons include descriptive text

If images provide instructions, describe all instructions in the alt text so that VoiceView speaks them.

VoiceView works in Review Mode

With VoiceView on, hold the Menu button for 2 seconds. The user should be able to navigate (Left/Right on the directional pad) to all text and controls, and change the granularity (Up/Down on the directional pad). The user must be able to read non-actionable text and items in Review Mode.

Accessibility gestures work

For instance, double-click to activate an item, or long press.

Magnification (screen zoom)

Magnification can be enabled and disabled

- Go to Accessibility.

- Enable Magnifier.

- Verify it zooms in/out.

Zoom functions across the UI

- Try zooming text, buttons, images.

- Confirm proper scaling and visibility.

Zoom preserves readability and doesn't clip content

- Zoom in/out on all screens.

- Verify that the layout is unchanged and that scroll works.

1.5x zoom works the first time it's activated

- Go to settings.

- Make sure zoom is off.

- Activate zoom.

- Observe zoom and app behavior.

Zoom level persists according to the user's preferences after the first visit

- Go to settings.

- Make sure zoom is off.

- Activate zoom.

- Observe zoom and app behavior.

- Close the app.

- Reopen the app or power on the device again.

- Verify the zoom level is preserved from before.

Magnifier works on focus

Make sure the user can navigate (using directional pad/select) to actionable controls and that the magnifier follows the focus.

All text and controls can be accessed (using menu+directional pad to "pan")

Subtitles / captions

Subtitles can be enabled and disabled

- Play video.

- Toggle subtitles in player/settings.

- Confirm visibility.

Closed Captioning styles work

Use CC Settings in your Local player while on the Global setting.

- Change subtitle settings.

- Verify UI updates accordingly.

Subtitles persist across sessions (if applicable)

- Enable subtitles.

- Exit app.

- Reopen.

- Check if the setting persists.

Subtitles are accurate and sync with audio

- Play content.

- Confirm subtitles are in sync and accurate.

The user gets accurate Closed Captions

Make sure your captions only have a short delay and that they follow the content.

Font / text size

Text size increases and decreases

- Access font settings.

- Adjust size.

- Verify text scales correctly across UI.

Font changes remain across the app

- Change font size.

- Navigate to different screens.

- Confirm consistency.

Layout doesn't break with a large font

- Set maximum font size.

- Verify no UI clipping or overlap.

Focus / visual indicator

Every actionable element has a visible focus ring

- Use remote to navigate through all UI.

- Check the focus ring on each element.

App has a consistent focus order (logical navigation flow)

- Navigate using the remote.

- Confirm intuitive focus order (top-to-bottom, left-to-right).

Optional: Audio feedback on focus change

- Enable audio cues.

- Navigate.

- Confirm audible indication on focus change.

Focus isn't lost after user action, such as on modal close

- Open and close modals or dialogs.

- The focus returns to the expected element.

Navigation

Navigating with the remote is accessible

- Use D-pad, Back, Select buttons.

- Verify all areas are accessible without touch input.

Keyboard navigation works correctly (if supported)

- Test with an external keyboard or accessibility input device.

- Verify all interactive areas.

No trap zones (can navigate away from all areas)

- Try entering/exiting modals, menus, and carousels.

- Verify there are no dead ends.

Latency

Accessible metadata loads as part of a standard payload package

The user receives a visual and audible notification that the content is loading on screen

The user isn't blocked if the connection times out

Verify they aren't blocked even if they are offline.

Accessibility by design

Images and icons include descriptive text

If images provide instructions, add these instructions to the alt-text for VoiceView to speak.

Status is communicated through multiple means

These means might include text, color, and sound.

All icons have a text description

Even commonly used icons need text descriptions.

Troubleshooting WebView accessibility

VoiceView doesn't speak any items when it's enabled

Check the accessibility markup.

A UI element reads as a button

This behavior occurs when UI elements don't have accessibility labels or descriptions, but they have accessibility roles set as button.

Spoken UI elements are different from what's visually displayed

This difference may be because the app didn't properly set the focused element for accessibility.

VoiceView speaks the title or UI element more than once

Repeated spoken items may be due to duplicate A11Y announcements. Remove all duplicate A11Y announcements.

Video playback results in continuous reading

Continuous reading may be from an incorrectly used label. The focus is on content details instead of the video player component, which has no text inside it. The app should only focus on the element/node when it's visually on the screen during video playback.

Audio ducking isn't working

Your media player may not be properly integrated with the platform audio focus system.

Related topics

- Overview of Vega Web Apps

- Vega WebView Component Reference

- Assistive Technologies for Vega

- Accessibility

Last updated: May 20, 2026