Alexa Conversations Guided Development Experience

• GA:

en-US, en-AU, en-CA, en-IN, en-GB, de-DE, ja-JP, es-ES, es-US• Beta:

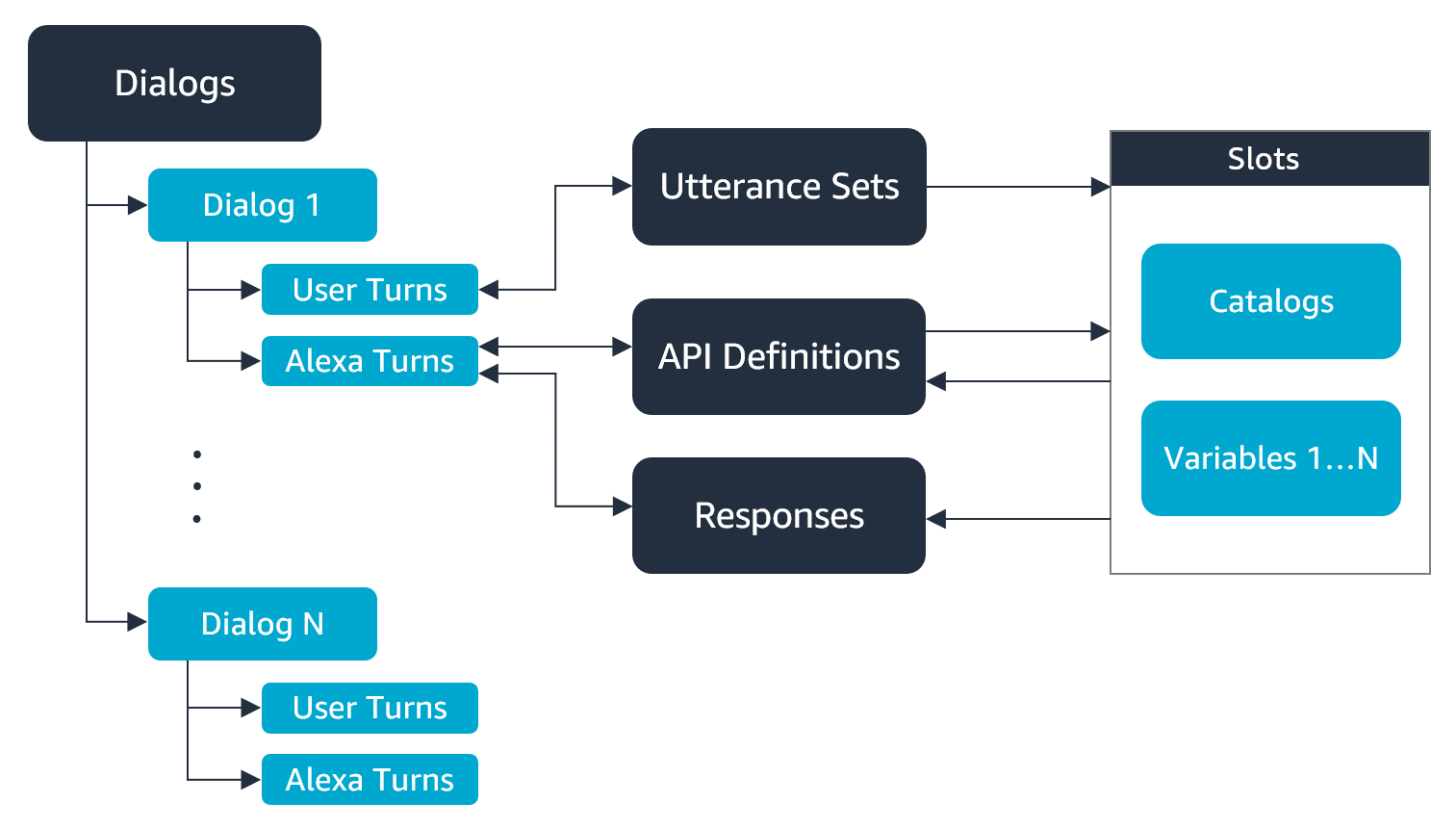

it-IT, fr-CA, fr-FR, pt-BR, es-MX, ar-SA, hi-INYou can develop Alexa Conversations skills by using a guided experience in the developer console. During the process, you provide sample dialogs in the dialog editor, and then annotate the sample dialogs with dialog acts, utterance sets, and responses that contain audio and visual elements. You also specify when to invoke APIs and which arguments to use so the dialog management model can gather the information to trigger your skill code.

Alexa Conversations components

When you develop an Alexa Conversations skill by using the developer console, you create the following components that train Alexa Conversations how to interact with your user.

| Component | Description |
|---|---|
| Dialogs | Dialogs are sample conversations between the user and Alexa. |
| Utterance sets | Utterance sets are sample variations in how a user might say a response or request. |
| API definitions | API definitions represent requests that your skill handles and the corresponding responses that your skill returns to Alexa Conversations. |
| Responses | Responses include audio and visual elements that Alexa uses to respond to the user. |
| Slot types | All variables that pass between user utterances, Alexa responses, and APIs must have a slot type. As with intent-based interaction models, slot types define how Alexa recognizes, handles, and passes data between components. For details, see Use Slot Types in Alexa Conversations. |
| Dialog variables | Dialog variables are instances of a slot type, provided by the user or an API response and used for dialog state, business logic, or response content. |
| Dialog acts | Dialog acts are tags that indicate the purpose of each interaction in a dialog, describing what is happening at a specific point in a conversation. Dialog acts train the conversational AI. |

Introduction to dialog acts

A key task in Alexa Conversations skill development is to label each turn of your sample conversations with a dialog act. A dialog act represents the purpose of the turn. For a full list of dialog acts, see Dialog Act Reference for Alexa Conversations. The following example shows the dialog act associated with each turn of a dialog for a weather skill.

User: What's the weather? (Dialog act: Invoke APIs)

Alexa: What city? (Dialog act: Request Args)

User: Seattle. (Dialog act: Inform Args)

Alexa: What date? (Dialog act: Request Args)

User: Today. (Dialog act: Inform Args)

Alexa: Are you sure you want the weather for Seattle today? (Dialog act: Confirm API)

User: Yes. (Dialog act: Affirm)

Alexa: The weather in Seattle for today is 70 degrees. (Dialog act: API Success)
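The annotated dialog above can also be pictured as plain data, which may help when reasoning about which dialog act belongs to which turn. The following is a minimal sketch only: the `DialogAct` enum, the `Turn` class, and the `acts_by_speaker` helper are hypothetical names made up for illustration, and this is not the format that the developer console or Alexa Conversations uses to store dialogs.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class DialogAct(Enum):
    """Only the dialog acts that appear in the weather example (hypothetical enum)."""
    INVOKE_APIS = "Invoke APIs"
    REQUEST_ARGS = "Request Args"
    INFORM_ARGS = "Inform Args"
    CONFIRM_API = "Confirm API"
    AFFIRM = "Affirm"
    API_SUCCESS = "API Success"


@dataclass(frozen=True)
class Turn:
    """One turn of a sample dialog, labeled with its dialog act."""
    speaker: str      # "User" or "Alexa"
    utterance: str
    act: DialogAct


# The weather dialog from the example, one Turn per line of the dialog.
WEATHER_DIALOG: List[Turn] = [
    Turn("User", "What's the weather?", DialogAct.INVOKE_APIS),
    Turn("Alexa", "What city?", DialogAct.REQUEST_ARGS),
    Turn("User", "Seattle.", DialogAct.INFORM_ARGS),
    Turn("Alexa", "What date?", DialogAct.REQUEST_ARGS),
    Turn("User", "Today.", DialogAct.INFORM_ARGS),
    Turn("Alexa", "Are you sure you want the weather for Seattle today?",
         DialogAct.CONFIRM_API),
    Turn("User", "Yes.", DialogAct.AFFIRM),
    Turn("Alexa", "The weather in Seattle for today is 70 degrees.",
         DialogAct.API_SUCCESS),
]


def acts_by_speaker(dialog: List[Turn], speaker: str) -> List[str]:
    """Return the dialog act labels for one speaker, in conversation order."""
    return [turn.act.value for turn in dialog if turn.speaker == speaker]
```

Calling `acts_by_speaker(WEATHER_DIALOG, "Alexa")` lists the acts on Alexa's side of the conversation, which makes the request, confirm, and success sequence easy to see.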

Keep in mind that if Alexa doesn't have all the required information, the action that a dialog act represents might not happen right away. For example, the dialog act associated with a user's request, "I want to order a pizza," is to order a pizza (that is, to invoke an API in your skill code that places a pizza order). However, Alexa doesn't yet have all the information that your API needs to fulfill the request, such as the size and toppings. Alexa therefore asks the user for the required information in a flexible, natural-sounding way. Alexa asks as many times as necessary for the user to provide the pieces of information that your API needs. Only then does Alexa invoke your skill code. Alexa Conversations AI controls the rest of the conversation.
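The gather-then-invoke behavior described above can be sketched as a simple loop. This is a deterministic illustration under stated assumptions, not how Alexa Conversations is implemented: the real service uses a trained dialog management model rather than a fixed loop, and every name here (`missing_args`, `gather_then_invoke`, `ask_user`, `place_order`, and the `size` and `toppings` arguments) is hypothetical.

```python
from typing import Callable, Dict, List, Optional


def missing_args(required: List[str], collected: Dict[str, str]) -> List[str]:
    """Return the required argument names that have no value yet, in order."""
    return [name for name in required if name not in collected]


def gather_then_invoke(
    required: List[str],
    initial_args: Dict[str, str],
    ask_user: Callable[[str], Optional[str]],
    invoke_api: Callable[[Dict[str, str]], str],
) -> str:
    """Ask for each missing argument until all are present, then call the API.

    ask_user returns None when the user did not supply a usable value,
    in which case the same argument is requested again.
    """
    collected = dict(initial_args)
    while True:
        missing = missing_args(required, collected)
        if not missing:
            break  # every required argument is known
        answer = ask_user(missing[0])
        if answer:
            collected[missing[0]] = answer
    # Only now is the (hypothetical) skill API invoked.
    return invoke_api(collected)


# Hypothetical pizza example: the user fails to answer the first prompt.
scripted_answers = iter([None, "large", "mushrooms"])
prompts: List[str] = []


def ask_user(arg_name: str) -> Optional[str]:
    """Record which argument was requested and return the next scripted reply."""
    prompts.append(arg_name)
    return next(scripted_answers)


def place_order(args: Dict[str, str]) -> str:
    """Stand-in for the skill code that places the order."""
    return f"Ordered a {args['size']} pizza with {args['toppings']}"


result = gather_then_invoke(["size", "toppings"], {}, ask_user, place_order)
```

In this sketch the API call happens exactly once, after the loop has asked for `size` twice and `toppings` once, which mirrors the point that the skill code runs only when all required arguments are available.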

The flow of dialog acts within a dialog must meet certain guidelines. For the supported dialog act flows, see Work with Dialog Acts in Alexa Conversations. For details about all dialog acts, see Dialog Act Reference for Alexa Conversations.

Related topics

- Get Started With Alexa Conversations

- How Alexa Conversations Works

- Steps to Create a Skill with Alexa Conversations

- Design for Natural Speech

Last updated: Nov 27, 2023